Ret = client.execute(queries=], num_workers=1) wait a minute, no they didn’t… they did not take 100 additional queries with a single worker separate from the queries they used for throughput and generate “p” latencies in the most idiotic way possible.

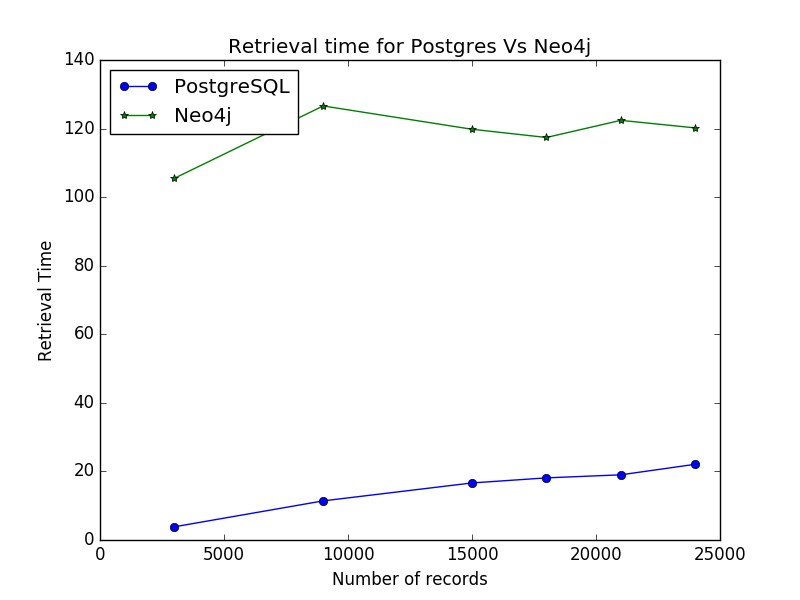

However, the CPUs of both systems were not at 100% meaning they were not fully utilized. They do a little division and come up with 112 requests per second for Neo4j and 175 for Memgraph: In a proper benchmark you would run each and every query for at least 60 seconds, preferably more and then compare. Why would you execute the query for different amount of times or different durations of time? That makes no sense. Neo4j executed the query 195 times, taking 1.75 seconds and Memgraph 183 times taking 1.04 seconds. On the left we have Neo4j, on the right we have Memgraph. Let’s take a look at the raw data for the first query : Those are not “graphy” queries at all, why are they in a graph database benchmark? Ok, whatever. Q4: MATCH (n) RETURN min(n.age), max(n.age), avg(n.age) Q3: MATCH (n:User) WHERE n.age >= 18 RETURN n.age, COUNT(*) Q2: MATCH (n) RETURN count(n), count(n.age) Q1: MATCH (n:User) RETURN n.age, COUNT(*) Has anyone ever tried testing the performance of a sports car with a “cold engine”? No, because that’s stupid, so we won’t do that here. Well use the medium dataset (which already takes hours to import the way they set it up) from a “hot engine”. I’ll stick with Ubuntu 22.04 with Linux kernel 6.0.5. Debian 4.19… uh… they can’t mean that, they probably mean Debian 10 with Linux kernel 4.19. I’ll just use my gaming pc with an Intel® Core™ i7-10700K CPU 3.80GHz × 8 cores. I would go on ebay and buy a refurbished one for $50 bucks, but I don’t have a rack to put it in and no guarantee it won’t catch on fire the second I turn it on. Ok, what’s their hardware for the benchmark results?Ī G6? I’m feeling so fly like a G6. Why would they do this? I keep forgetting… because they don’t actually want anybody trying this. So instead of the import taking 2 minutes, it takes hours.

Not batches of transactions… but rather painful, individual, one at a time transactions one point 8 million times. They decided to provide the data not in a CSV file like a normal human being would, but instead in a giant cypher file performing individual transactions for each node and each relationship created. Why doesn’t everybody just do that instead of creating their own weird thing that is probably full of problems? Oh right, because everyone produces bullshit benchmarks. An industry standard tool to test performance. So I decided to just do a simple project using Gatling. Why would they do this? Because it’s a bullshit benchmark and they don’t actually want anybody looking too deeply at it.

Which means the other database vendors can’t actually use this anyway. IANAL, but that sounds like you aren’t allowed to use their benchmark code if you provide a competing solution. using the Licensed Work to create a work or solution which competes (or might reasonably be expected to compete) with the Licensed Work. “Authorised Purpose” means any of the following, provided always that (a) you do not embed or otherwise distribute the Licensed Work to third parties and (b) you do not provide third parties direct access to operate or control the Licensed Work as a standalone solution or service:ģ. Worse still, the work is under a “Business Source License” which states: So let’s tear into it.Īt first I considered replicating it using their own repository, but it’s about 2000 lines of Python and I don’t know Python. Instead these clowns put it on a banner on top of their home page. How is the Graph Database category supposed to grow when vendors keep spouting off complete bullshit? I wrote a bit about the ridiculous benchmark Memgraph published last month hoping they would do the right thing and make an attempt at a real analysis.

Hey HackerNews, let me just drop my mixtape, checkout my soundcloud and “Death Row” is the label that pays me.
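For anyone who wants to check the “little division” above, here is a minimal Python sketch. It is not Memgraph’s benchmark code and not my Gatling project; the names `throughput` and `run_for` are made up for illustration. The first function redoes the requests-per-second arithmetic from the execution counts and timings quoted in the post. The second shows, under the assumption that a query can be wrapped in a plain callable, what running it for a fixed duration rather than a fixed count looks like.

```python
import time
from typing import Callable, Tuple


def throughput(executions: int, seconds: float) -> float:
    """Requests per second for a number of executions over an elapsed time."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return executions / seconds


def run_for(query: Callable[[], object], duration_s: float) -> Tuple[int, float]:
    """Call `query` (a hypothetical stand-in for a real database call)
    repeatedly until at least `duration_s` seconds of wall-clock time pass.
    Returns the number of executions and the actual elapsed time."""
    start = time.perf_counter()
    executions = 0
    while time.perf_counter() - start < duration_s:
        query()
        executions += 1
    elapsed = time.perf_counter() - start
    return executions, elapsed


if __name__ == "__main__":
    # Counts and timings as quoted in the post for the first query.
    print(f"Neo4j:    {throughput(195, 1.75):.1f} req/s")
    print(f"Memgraph: {throughput(183, 1.04):.1f} req/s")

    # Same duration for every system under test; 0.1 s here only to keep the
    # demo quick, a real run would use 60 seconds or more.
    count, elapsed = run_for(lambda: sum(range(1000)), duration_s=0.1)
    print(f"dummy query: {throughput(count, elapsed):.0f} req/s")
```

The two quoted figures work out to roughly 111.4 and 176.0 requests per second, which is in the neighborhood of the published 112 and 175; presumably the published counts and timings were rounded. With a fixed duration every system gets the same wall-clock budget, so the execution counts become the thing you compare.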
